The 8 best TestRail alternatives in 2026

By qtrl Team · Engineering

TestRail isn't the default choice it used to be. The general consensus across QA forums, review sites, and community Slacks is that the product no longer holds itself to the standards it once did. Buggy releases, slow support, and a thin AI story have worn down a lot of long-time users. Meanwhile, the test management space has more credible options than it did even two years ago, and the question we hear most often from QA teams now isn't whether to switch, but what to switch to.

Up front: we make qtrl, so we're a vendor writing about other vendors. We're not going to pretend that's neutral. What we'll do instead is tell you which tool fits which kind of team, where each one is genuinely strong, and where each one falls short, including ours.

We've covered why teams are moving on from TestRail in a previous post. This one is the next step: what to actually replace it with.

How to pick a TestRail alternative

Test management tools all look the same on a feature matrix. Test cases, runs, suites, Jira integration, reporting. The differences only show up once you start using one seriously. Before comparing logos, get clear on which of these matters most to your team:

- Where your tests live today. If your engineering org runs everything through Jira, a Jira-native tool will feel different from a standalone app. Both can work, but the daily friction is not the same.

- Who needs visibility. If only QA looks at test results, you have one set of options. If PMs, developers, and compliance also need to see what's tested, your shortlist gets shorter fast (and licensing costs get bigger).

- Manual versus automated balance. Some tools were built around manual test cases and bolted automation on later. Others started with CI/CD as the default. Pick the one that matches where your team actually spends its time.

- How regulated you are. If you ship into healthcare, finance, or anything covered by the EU AI Act, audit trails and traceability aren't a nice-to-have. They're the whole reason you have a test management tool.

- How much AI you actually want. Some teams want AI to write and run tests for them. Others want a clean, predictable system and would rather AI stay out of the way. Both are valid. Know which one you are before you start demoing.
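
One way to make those priorities concrete is a quick weighted scorecard. The sketch below is only an illustration: the criterion names, the weights, and the "Tool A" / "Tool B" ratings are all placeholders we made up, not scores for any real product. Swap in your own numbers after a trial.

```python
# Hypothetical weights (0-5): how much each criterion matters to YOUR team.
WEIGHTS = {
    "jira_fit": 5,
    "non_qa_visibility": 3,
    "automation_fit": 4,
    "compliance": 2,
    "ai_fit": 1,
}


def weighted_score(ratings: dict[str, int], weights: dict[str, int] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion ratings (each rated 0-5)."""
    total_weight = sum(weights.values())
    if total_weight == 0:
        raise ValueError("at least one criterion needs a non-zero weight")
    return sum(weights[name] * ratings[name] for name in weights) / total_weight


# Placeholder ratings you would fill in from your own trials.
candidates = {
    "Tool A": {"jira_fit": 5, "non_qa_visibility": 4, "automation_fit": 2,
               "compliance": 4, "ai_fit": 1},
    "Tool B": {"jira_fit": 2, "non_qa_visibility": 3, "automation_fit": 5,
               "compliance": 3, "ai_fit": 4},
}

# Highest weighted score first.
ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

The number itself matters less than the argument it forces: agreeing on the weights before the demos start is usually where the real disagreements surface.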

With that out of the way, here are the alternatives worth your shortlist, grouped by the kind of team they fit best.

TestRail alternatives compared at a glance

Before we get into each tool, here's how the eight stack up on the factors most teams care about when replacing TestRail.

| Tool | Best for | Jira-native | AI features | Compliance depth |

|---|---|---|---|---|

| Qase | Clean TestRail swap | No | Moderate (catching up) | Basic |

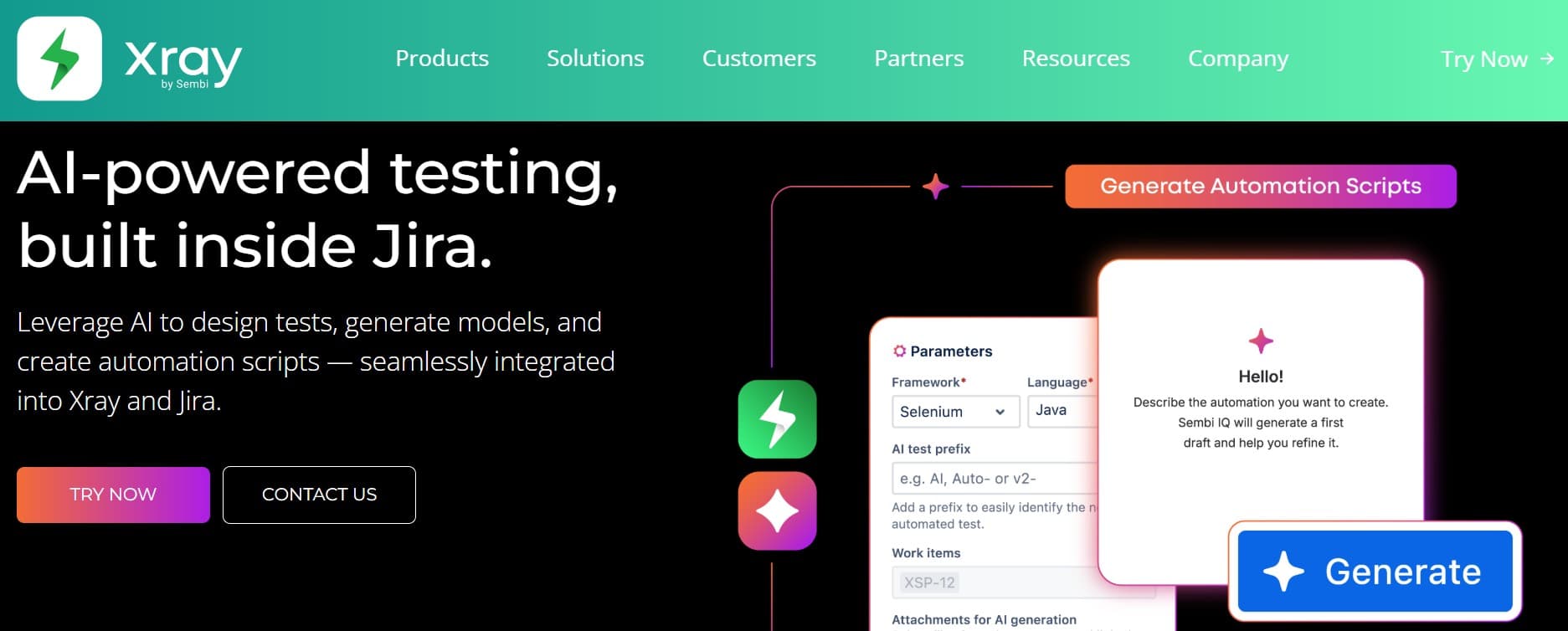

| Xray | Jira teams, BDD-heavy | Yes | Limited | Strong |

| Zephyr Scale | Enterprise Jira orgs | Yes | Limited | Strong |

| qTest | Large regulated QA programs | No | Moderate | Strong |

| PractiTest | Mid-size regulated teams | No | Limited | Strong |

| Allure TestOps | Automation-heavy teams | No | Limited | Moderate |

| Testiny | Small teams, simple apps | No | Limited | Basic |

| qtrl | AI-native test management | No | Native (agents + authoring) | Strong |

Jira-native test management: Xray vs Zephyr Scale

If your developers, PMs, and QA all work in Jira every day, the decision usually comes down to Xray or Zephyr Scale. Both are mature, both are backed by big vendors (Xray, originally from Xpand IT, is now part of Idera; Zephyr Scale belongs to SmartBear), and both treat test cases as first-class Jira issues. That means everyone with a Jira seat can see them, link them, and comment on them without buying another license.

Xray tends to win on flexibility. It supports Cucumber and BDD natively, has a strong REST API, and handles large test repositories without slowing Jira to a crawl. The reporting is decent out of the box. The downside: the UI inherits all of Jira's quirks, and the learning curve for new users is steeper than a purpose-built test app.

Zephyr Scale (formerly TM4J) is the more polished option of the two. Test case organization is cleaner, the cross-project reporting is stronger, and the Jira integration feels less bolted on. It's a good fit for enterprise programs that need traceability across many teams. The trade-off is price. Zephyr Scale at scale is not a small line item.

Both share one limitation: if you ever decide to leave Jira, your test management leaves with it. That's fine if Jira is non-negotiable in your org. It's worth knowing if it isn't.

Modern TestRail replacements: Qase and Testiny

These are the two tools we hear named most often when teams say "TestRail, but it doesn't feel like it's from 2014."

Qase is the most direct TestRail replacement on the market. The data model is familiar, the import tooling is solid, and the UI is genuinely pleasant. It has a free tier that's usable for small teams, real CI integrations, and a public API that doesn't feel like an afterthought. Qase has been adding AI features (test case generation, defect analysis), though as of early 2026 they're still catching up to the AI-native tools. If your priority is "same workflow, better product," Qase is the safest bet on this list.

Testiny is newer and smaller, but worth a look if you value simplicity. It's opinionated, fast, and doesn't try to be everything. The trade-off is that it has fewer integrations and less of an ecosystem than Qase. For a small QA team that wants something that just works, it's a fair pick. For a 50-person QA org with complex compliance needs, it probably isn't the right size.

TestRail alternatives for regulated industries: qTest, PractiTest, Allure TestOps

Regulated industries have a different set of requirements. You need audit trails, role permissions that hold up to scrutiny, validated environments, and reporting that someone outside QA can actually read.

qTest (Tricentis) is the heavyweight option. It's built for large enterprise QA programs and has the depth to back it up: requirements traceability, audit history, integration with Tricentis's broader testing platform, and the kind of admin controls compliance teams expect. The cost reflects that, and so does the implementation effort. Don't pick qTest if you're a 10-person QA team. Do consider it if you're running a global QA program at a Fortune 500.

PractiTest sits in the middle. It's less heavy than qTest, more structured than Qase, and has a strong story around traceability and reporting. Teams in healthcare and finance often shortlist it for that reason. The UI isn't the prettiest in the category, but the substance is there.

Allure TestOps is a different kind of fit. It's built around the Allure reporting framework that many automation engineers already know, so if your team is automation-heavy and you live in Allure reports, the upgrade path is natural. It's less suited for manual-test-case-driven workflows.

AI-native test management with execution built in: qtrl

This is the part where we're biased, so take it with whatever sodium you need. But the category does exist, and it's worth understanding even if you don't pick us.

qtrl is two things at once. It's a structured test management system with the traceability, role-based access, and audit history that regulated teams need, and it's an agentic execution layer that can actually run tests against your product in a real browser. Most of the tools above are one or the other. The classic test management tools give you structure but leave execution to a separate Playwright or Cypress repo. The newer AI tools give you execution but don't plug into a real test management workflow with audit trails and approvals.

That combination matters more in 2026 than it did a year ago. Most of the EU AI Act's obligations are scheduled to start applying in August, and teams shipping AI features need both sides: a test management system that holds up to audit, and a way to actually exercise non-deterministic behavior at scale. We've written about both pieces in "The EU AI Act and QA" and "What is agentic testing."

Where qtrl fits best: teams that want structured, compliant test management without running a separate automation stack on the side, and teams that don't want to bolt AI onto a tool that wasn't designed for it. Where it doesn't fit: if you need a long shelf of legacy compliance certifications, the bigger enterprise incumbents have more paperwork to point at today.

Other TestRail alternatives worth mentioning

There are other names you'll see in any search: TestMonitor, TestCollab, aqua cloud, Tuskr, Kualitee, and a long tail of smaller tools. Most of them are fine. Most of them will do the job for a small or mid-size team. The reason they're not on the main list isn't that they're bad. It's that they don't solve a problem the bigger options don't already solve. If a free trial of one of them clicks for your team, that's a valid reason to pick it.

How to choose the right TestRail alternative

If you want a shortcut, here's the rough decision tree we'd use:

- Jira is the center of gravity in your org and won't change: shortlist Xray and Zephyr Scale. Pick Xray for flexibility and BDD, Zephyr Scale for polish and enterprise reporting.

- You want a clean, fast TestRail replacement and your workflow is mostly fine: start with Qase. It's the lowest-risk swap.

- You're in healthcare, finance, or anything regulated: qTest if you're large, PractiTest if you're mid-sized, Allure TestOps if you're automation-heavy, and qtrl if you also want AI execution built in instead of a separate automation stack.

- You're tired of maintaining brittle automation and want structured test management with AI agents that actually run the tests: look at qtrl and the broader agentic testing category.
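
If it helps to see the decision tree above as logic, here is a minimal sketch. The `TeamProfile` fields and the `shortlist` function are names we invented for illustration, and two things are our assumptions rather than rules from the list: the order in which the branches are checked, and the use of Qase as the fallback when nothing else applies.

```python
from dataclasses import dataclass


@dataclass
class TeamProfile:
    """Hypothetical description of a team; every field is an illustrative assumption."""
    jira_centric: bool = False
    regulated: bool = False
    size: str = "small"  # "small", "mid", or "large"
    automation_heavy: bool = False
    wants_ai_execution: bool = False


def shortlist(team: TeamProfile) -> list[str]:
    """Mirror the rough decision tree; earlier branches win (an assumed ordering)."""
    if team.jira_centric:
        return ["Xray", "Zephyr Scale"]
    if team.regulated:
        picks = []
        if team.size == "large":
            picks.append("qTest")
        elif team.size == "mid":
            picks.append("PractiTest")
        if team.automation_heavy:
            picks.append("Allure TestOps")
        if team.wants_ai_execution:
            picks.append("qtrl")
        if picks:
            return picks
    if team.wants_ai_execution:
        return ["qtrl"]
    # Fallback: the "lowest-risk swap" from the list above.
    return ["Qase"]


print(shortlist(TeamProfile(regulated=True, size="large", wants_ai_execution=True)))
print(shortlist(TeamProfile(jira_centric=True)))
```

Real teams rarely fit one branch cleanly, so treat the output as a starting shortlist for trials, not a verdict.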

Frequently asked questions about TestRail alternatives

What's the best TestRail alternative in 2026? There isn't a universal winner. Qase is the safest direct replacement if you want a familiar workflow and a modern UI. Xray and Zephyr Scale are best for Jira-centric orgs. qTest and PractiTest lead for regulated enterprises. qtrl fits teams that want AI agents executing tests inside a real test management system.

Is Qase a good replacement for TestRail? Yes, for most teams. It has the most similar data model, solid import tooling, a usable free tier, and a UI that feels like something from this decade. Its AI features are still catching up to the AI-native tools, so if AI authoring is the main reason you're leaving TestRail, Qase probably isn't the right stop.

Which TestRail alternative works best with Jira? Xray and Zephyr Scale. Both treat test cases as Jira issues, so Jira users can see and link tests without a separate license. Xray wins on BDD and flexibility; Zephyr Scale wins on cross-project reporting and enterprise polish.

Are there free TestRail alternatives? Qase has a real free tier that works for small teams. Testiny's entry pricing is low. Most enterprise options (qTest, Zephyr Scale, PractiTest) don't have meaningful free tiers.

Can I import my TestRail data into another tool? Qase, Xray, Zephyr Scale, qTest, and PractiTest all have TestRail importers of varying quality. Run the import on a real messy project (not a demo dataset) before you commit. The gap between "supports TestRail import" and "handles your actual TestRail export cleanly" can be wide.

What others say about the alternatives

Before you commit, it's worth reading the public reviews of each candidate. Below are recurring complaints we've pulled from G2 and Gartner, starting with TestRail itself for comparison, so you know what to probe in your own trial.

What others say about TestRail

“TestRail starts to feel slow and clunky once suites grow large or you run lots of configurations and concurrent users, and the UI still feels old-school compared to newer tools.”

G2 reviewer, Program Manager (Small-Business) · G2 reviews

“Support has been hard to reach for quick resolutions, billing and product logins are separate, and managing multiple projects is more painful than it should be.”

G2 reviewer, Computer Software (Small-Business) · G2 reviews

“It slows down with lots of test cases, runs, or users, and collaboration feels static next to modern tools because comments lack real-time team interaction.”

G2 reviewer, IT Manager (Mid-Market) · G2 reviews

What others say about Xray

“Xray is overrated and hard to work with. It is slow, lags on large test sets, and the UX is unclear.”

G2 reviewer, QA Team Lead (Mid-Market) · G2 reviews

“Xray does not prevent duplicate issues, lacks Slack integration, cannot report issues from email, and has no external dashboard.”

G2 reviewer, Junior Software Tester (Mid-Market) · G2 reviews

“The core concepts are complex for new users, the UI gets slow on large Jira projects, and bulk updates on big data sets are cumbersome.”

G2 reviewer, Lead SDET (Mid-Market) · G2 reviews

What others say about qTest

“The Azure Pipelines integration does not fully update test status, which limits how much you can trust the automated results.”

G2 reviewer, IT and Services (Mid-Market) · G2 reviews

“Advanced metrics and reporting feel clunky and workflow customization is limited.”

G2 reviewer, Functional Tester (Mid-Market) · G2 reviews

“qTest handles mainstream test management but lacks newer AI-era capabilities such as self-healing tests, and AI-generated cases still need substantial manual cleanup.”

Gartner reviewer, Software Developer in IT Services (1B–10B USD) · Gartner Peer Insights

What others say about Zephyr Scale

“The UI is initially confusing, integrations sometimes need better syncing, large test cases can be slow, pricing feels high for smaller teams, and support can be delayed.”

G2 reviewer, Manual Tester (Enterprise) · G2 reviews

“Reliable overall, but reporting and performance are areas needing improvement, and large repositories can feel slow.”

Gartner reviewer, Engineering Manager in IT Services (500M–1B USD) · Gartner Peer Insights

“Customer support has felt overwhelmed and slow. Open tickets have aged four months or more without resolution.”

Gartner reviewer, Manager of IT Services in Telecommunications (10B+ USD) · Gartner Peer Insights

What others say about PractiTest

“The interface can feel slow, report customization is confusing for new users, and bulk test-case import is difficult.”

G2 reviewer, Associate Test Engineer (Mid-Market) · G2 reviews

“Cloning across projects does not copy templates, some filters behave incorrectly after redirects, and there is little audit history around requirements-to-tests link changes.”

G2 reviewer, Software Tester (Enterprise) · G2 reviews

“Advanced features come with a learning curve, some actions take more clicks than expected, and report generation can lag.”

G2 reviewer, QA and Test Manager (Small-Business) · G2 reviews

What others say about Qase

“Qase’s AI assistant makes step editing unpredictable. Deleting a step also deletes pauses, the AI can regenerate previously removed steps, and there is no way to lock steps or manage them in bulk.”

G2 reviewer, QA Engineer (Mid-Market) · G2 reviews

“Qase becomes less smooth on large test suites, especially around filtering and navigation, and the reporting is too limited for richer custom insights.”

G2 reviewer, Software Engineer (Mid-Market) · G2 reviews

“Some features are not clearly laid out at first and the workflow takes time to feel natural.”

G2 reviewer, CRM Developer (Mid-Market) · G2 reviews

What others say about Testiny

“Testiny cuts off information on small or low-resolution screens and you cannot launch a run directly from a parent folder containing child test folders.”

G2 reviewer, QA Engineer (Small-Business) · G2 reviews

“Importing more than around 6,900 test cases triggered an API rate limit, which is meaningful friction for larger migrations.”

G2 reviewer, Director of QA (Small-Business) · G2 reviews

“Still a newer player. Missing features like milestones and some integrations, and the API docs lack ready-made language wrappers.”

G2 reviewer (Enterprise) · G2 reviews

Things to do before you sign anything

Two things actually move the needle when evaluating a test management tool, and most teams skip both.

The first is to run a real migration on a real project. Not a demo dataset, not a sales-curated import. Pick one of your messier active projects, with the broken links, the half-orphaned test cases, and the run history that goes back too far, and bring it across. You'll learn more in two hours of that than in five sales calls. The tools that handle the mess gracefully are the ones that will handle yours next year.
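
Before you run that trial import, it can help to know how messy the project actually is. The sketch below is one rough way to audit a CSV export. The column names (`id`, `title`, `section`, `refs`) are hypothetical, so rename them to match the headers in your own export, and treat "no section" and "no reference" as stand-ins for orphaned cases and broken links rather than exact checks.

```python
import csv
import io
from collections import Counter


def audit_export(csv_text: str) -> dict[str, list[str]]:
    """Flag rows likely to cause trouble in an import.

    Column names (id, title, section, refs) are hypothetical placeholders.
    """
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    title_counts = Counter(row["title"].strip().lower() for row in rows)
    return {
        # Cases with no section are a proxy for half-orphaned test cases.
        "orphaned": [r["id"] for r in rows if not r["section"].strip()],
        # Duplicate titles often mean copy-pasted cases that drifted apart.
        "duplicate_titles": [
            r["id"] for r in rows if title_counts[r["title"].strip().lower()] > 1
        ],
        # Cases with no requirement or ticket reference have nothing to trace to.
        "missing_refs": [r["id"] for r in rows if not r["refs"].strip()],
    }


# Tiny made-up sample; replace with the contents of your real export file.
sample = """id,title,section,refs
C1,Login works,Auth,PROJ-12
C2,Login works,Auth,
C3,Export report,,PROJ-40
"""

report = audit_export(sample)
for issue, case_ids in report.items():
    print(f"{issue}: {case_ids}")
```

Run the same audit on what lands in the new tool after the import. If the flagged cases disappeared silently instead of being reported, that tells you how the importer treats the mess.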

The second is to put it in front of someone who isn't in QA. Walk a developer or a PM through the tool and ask them to find the test results for the feature they shipped last week. If they can't do it without help, or without a license they don't already have, you haven't solved the visibility problem you came in to solve. This is the failure mode that quietly killed TestRail for a lot of teams, and it's worth checking before you swap one version of it for another.

If structured, compliant test management with agentic execution built in is on your shortlist, qtrl was built for exactly that: the audit trail and traceability of a real test management system, plus AI agents that run tests against your real product instead of a recording. Try it out and see how it fits next to whatever else you're evaluating.

Have more questions about AI testing and QA? Check out our FAQ.